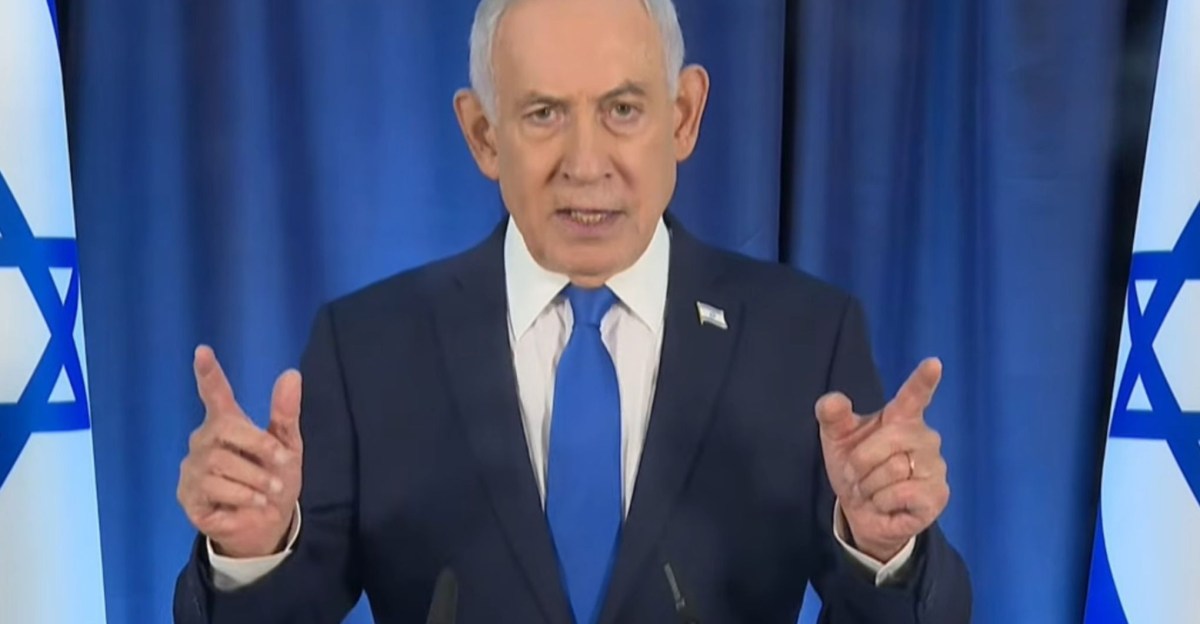

In an increasingly digital world where artificial intelligence can convincingly mimic a human likeness, Israeli Prime Minister Benjamin Netanyahu finds himself at the center of a perplexing controversy. Persistent conspiracy theories circulating on social media allege that Netanyahu has been gravely injured or killed and then replaced by AI-generated deepfakes. The claims have been fueled by video clips purportedly showing the prime minister with an extra finger or drinking from an inexplicably full coffee cup, highlighting the growing challenge of telling reality from digital fabrication.

While no concrete evidence supports claims of Netanyahu’s demise, AI’s ability to create believable clones across media formats makes such rumors exceptionally difficult to quash definitively. The situation illustrates the impact of eroding trust in visual and audio information.

The Genesis of Digital Doubt

The deepfake speculation intensified following a livestreamed press conference featuring Netanyahu on a Friday. A segment of the broadcast quickly spread online, with users pointing to a moment where the prime minister allegedly displayed six fingers on his right hand. Because older generative AI tools have historically struggled to render human hands accurately, this apparent anomaly fueled theories that deepfake technology was being used to conceal Netanyahu’s incapacitation or death following an Iranian missile strike.

However, closer examination, alongside analysis by fact-checking organizations such as Snopes and PolitiFact, has largely debunked the ‘extra finger’ claim. The visual inconsistency likely stemmed from video compression artifacts or poor lighting. Furthermore, the video’s length, nearly 40 minutes, far exceeds the duration that current AI video generation models can typically produce, casting further doubt on the deepfake assertion.


Proof-of-Life Under Scrutiny

In an effort to counter the swirling allegations, Netanyahu released a video via his X (formerly Twitter) account. The clip featured him in a coffee shop, explicitly asking the person recording to count his fingers. Yet, this attempt at reassurance paradoxically sparked a fresh wave of skepticism. Social media users promptly highlighted what they perceived as visual inconsistencies within this new footage, suggesting it too could be an AI fabrication.

Observations ranged from the liquid level in Netanyahu’s coffee cup, which appeared not to drop as he drank, to a ring on his finger that seemed to vanish and reappear. The background also came under scrutiny, with a visible till allegedly displaying a date from 2024. Some critics additionally questioned Netanyahu’s use of his right hand to drink, claiming he is known to be left-handed. While many of these points could be attributed to video quality issues or misinterpretation, their cumulative effect underscores how hard it has become to authenticate content in the current digital climate.

The Wider Implications of a Trustless Digital Landscape

The difficulty in definitively verifying the authenticity of these videos highlights a critical flaw in our contemporary information ecosystem. Neither clip carried verifiable metadata from standards like C2PA Content Credentials or SynthID, which are designed to either confirm authenticity or trace the use of AI tools. Despite pledges from platforms such as Instagram and YouTube to flag AI-generated or manipulated content, none of the shared footage bore such indicators.

This crisis of trust is particularly acute amid ongoing geopolitical tensions, where the demand for verified information is paramount. The situation mirrors past incidents, such as the manipulated Kate Middleton photograph, but is exacerbated by AI’s advanced ability to produce highly convincing fakes with fewer discernible ‘tells.’ This makes it increasingly difficult to ascertain the veracity of visual media, even in the absence of clear evidence of manipulation.

The ambiguity created by AI’s capabilities is already being exploited to sow distrust. President Donald Trump, for instance, accused Iran of employing AI as a “disinformation weapon” to falsely portray successful attacks against the United States. Ironically, this accusation comes from an administration that has itself used deepfakes and manipulative content for political purposes. Trump’s subsequent warning to reporters about the dangers of AI underscores the complex and often hypocritical landscape of digital truth in an era where even the simplest actions, like holding a coffee cup, can become subjects of intense scrutiny.