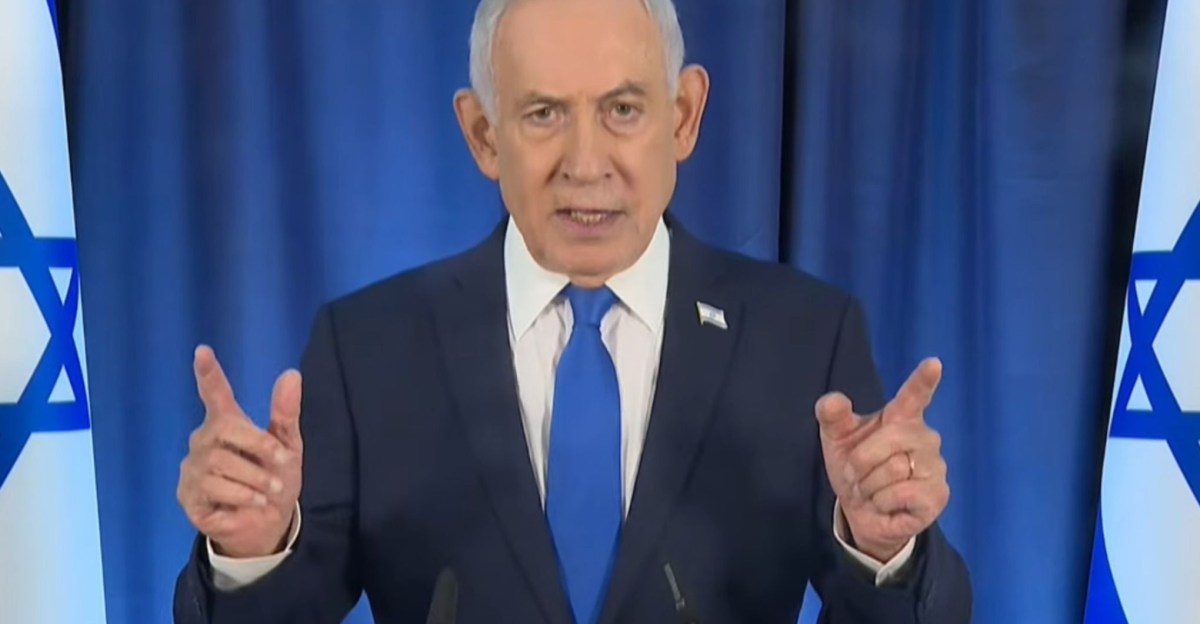

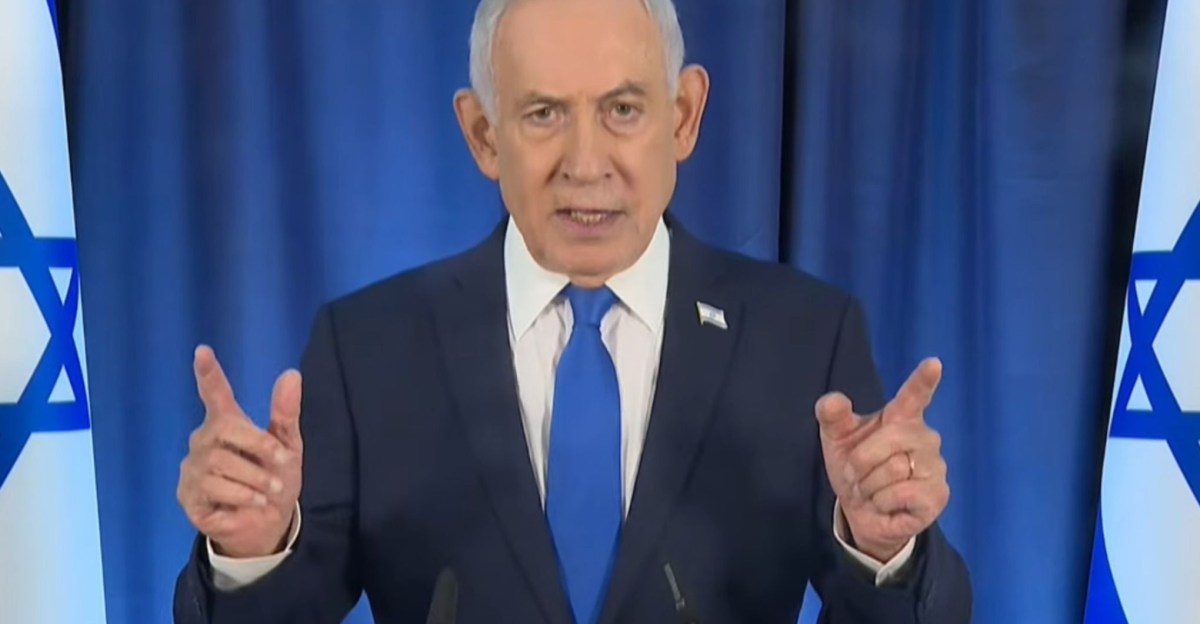

In an era increasingly defined by advanced artificial intelligence, verifying reality has become an unexpectedly complex challenge. Israeli Prime Minister Benjamin Netanyahu currently finds himself at the center of this modern dilemma, battling persistent online conspiracy theories suggesting he has been incapacitated and replaced by AI-generated deepfakes.

From social media clips purportedly showing him with an extra digit to videos where a coffee cup seems to defy the laws of physics, the sheer volume of speculative content highlights a concerning trend: the erosion of collective trust in visual evidence.

Photo: theverge.com

Photo: theverge.com

Although there is little concrete evidence to support claims that Netanyahu has died or been replaced, AI's ability to convincingly replicate a person's likeness in video, images, and audio makes such claims increasingly difficult to refute conclusively. The episode starkly illustrates a world in which people can no longer fully trust their own eyes.

The Genesis of Deepfake Claims

The wave of speculation began after a recent livestreamed press conference featuring Netanyahu. Social media users quickly circulated a clip they claimed showed the Israeli leader with six fingers on his right hand. This supposed anomaly instantly fueled deepfake theories, because earlier generative AI models were notorious for rendering hands with extra or malformed fingers.

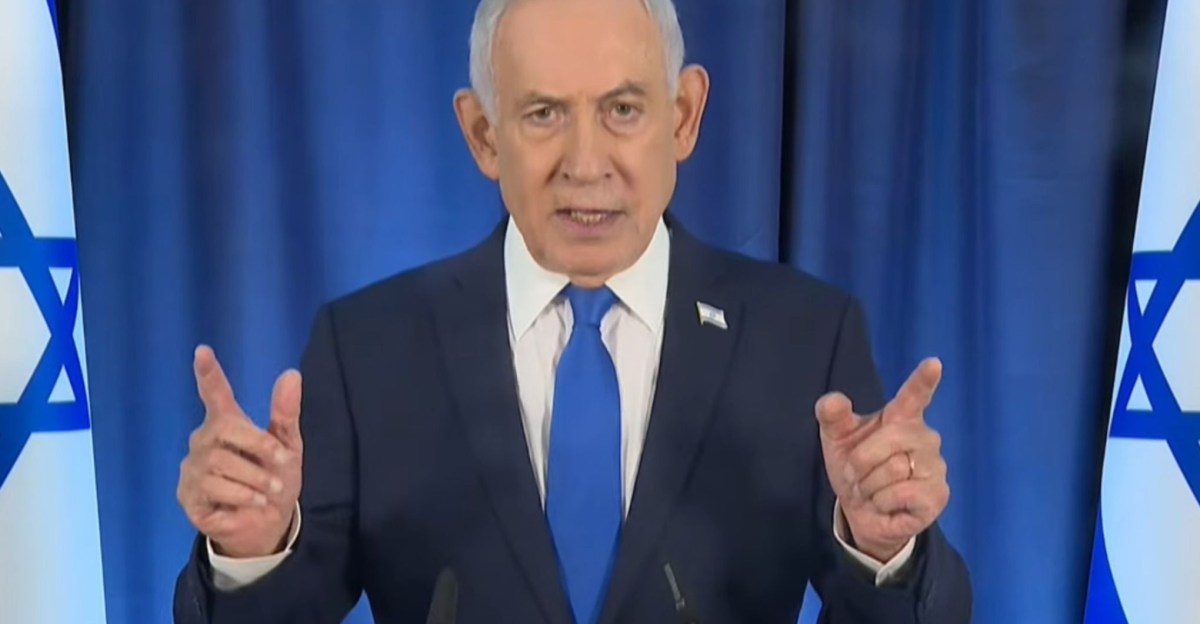

Photo: theverge.com

The underlying insinuation was that the Israeli government might be deploying deepfake technology to conceal the Prime Minister’s alleged death during an Iranian missile strike.

On closer examination, however, the supposed “extra” finger appears to be an artifact of video compression, low image quality, or lighting. Reputable fact-checking organizations, including Snopes and PolitiFact, swiftly debunked the claim that the video was AI-generated. In addition, the original press conference runs for almost 40 minutes, far longer than the clips current AI video generation models can typically produce, which lends further weight to its authenticity.

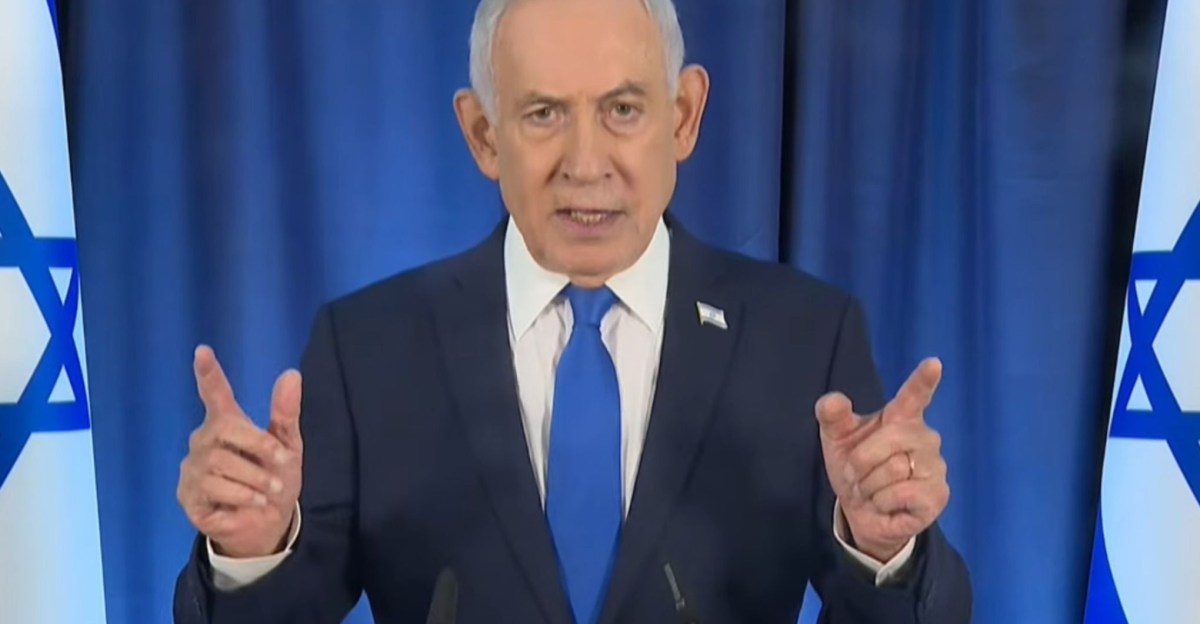

Proof-of-Life Backfires

In an effort to quash the burgeoning deepfake narratives, Prime Minister Netanyahu subsequently released a video on his X (formerly Twitter) account. Filmed in a coffee shop, the footage shows him directly addressing the camera, asking an unseen person to count his fingers. This attempt at a “proof-of-life” video, however, only served to ignite further scrutiny and fresh deepfake accusations.

Photo: theverge.com

Online commentators quickly pointed to new perceived inconsistencies in this second video: coffee that seemed to move unnaturally, or never to run low, in Netanyahu's cup; a ring on his finger that appeared to vanish and reappear; and a 2024 date visible on a cash register in the background. Some even questioned the footage because Netanyahu, widely believed to be left-handed, is shown drinking with his right hand.

As the debate intensified, the grounds for suspicion grew more convoluted and subjective, extending to how naturally he held the cup or even the Prime Minister's “general aura.” This illustrates a profound challenge: without definitive verification tools, disproving a deepfake claim can become nearly impossible, however flimsy the evidence of manipulation.

The Broader Crisis of Digital Authenticity

A major factor in this escalating crisis of trust is the lack of widely deployed, robust content-authentication technology. Neither video carried provenance signals from systems such as C2PA Content Credentials, which attach signed metadata recording how a file was captured and edited, or Google's SynthID, an invisible watermark embedded in AI-generated content. Signals like these could have helped confirm the videos' authenticity or disclose any AI involvement. Social media platforms, despite their stated commitments to label AI-generated or manipulated content, likewise displayed no such labels on these clips.

Public demand for verified information is especially acute amid ongoing geopolitical tensions, such as the conflict involving Iran, Israel, and the United States. Yet the online information ecosystem is ill-equipped to meet that demand, leaving individuals to rely on the methods of professional fact-checkers or simply to trust other people's assessments.

This uncertainty has already been weaponized. President Donald Trump, in a recent Truth Social post, accused Iran of using AI as a “disinformation weapon” and called for severe penalties for media outlets that spread such content. He made the accusation even though his own administration has a history of sharing deepfakes and manipulated content for political purposes. The irony underscores the need for consistent standards of digital authenticity and accountability. Until such standards are universally adopted and enforced, the simple act of holding a coffee cup can become fodder for global mistrust.